| Module 0 : Objectives of NPFAT Course |

Network Packet Forensics Analysis Training (NPFAT) gives students a working knowledge of the most common Internet protocols (such as HTTP, IM, Email, Telnet, video streaming and VoIP), of collecting and capturing network packets, and of analysing and reconstructing the packets that carry these protocols. It also covers solution implementation and application, along with practical case studies. The course presents solutions based on the Decision Computer E-Detective series of products, which help Network Security Managers, Administrators, Security Auditors, Forensics Investigators (Police, Military Intelligence, National Security etc.) and Law Enforcement Officers preserve digital network evidence and extend network-based crime investigation.

The NPFAT course aims to equip Forensics Investigators and Law Enforcement Officers with sufficient knowledge to investigate Internet-based or cyber crime. It covers how to implement the right solution for collecting, analysing and correlating Internet data and reporting it as valid, legal evidence in court. Only evidence obtained through a proper investigation process will be accepted by a court of law. The detailed explanation of various Internet protocols is intended to give investigators in-depth information, so that they can deploy a solution or explain its operation and architecture if required.

In addition, Network Administrators and Security Auditors will learn to analyse various Internet protocols and to identify the online behaviour of network users. This allows them to track down and identify the users who consume most of the company's Internet bandwidth so that the necessary action can be taken. Furthermore, if there is a dispute, or confidential company information or data is lost through the Internet, the culprit can be identified from the evidence that has been captured and preserved.

Prerequisites for NPFAT

This training course, which includes hands-on practical sessions, is intended for new users, particularly network administrators, security auditors, forensic professionals and law enforcement personnel who use a Network Forensics Tool or other forensics software to examine, analyse and classify digital evidence.

To gain the maximum benefit from this course, you should meet the following requirements:

- Read and understand the English language.

- Perform basic operations on a computer.

- Have knowledge of computer networking and wireless networking.

- Have knowledge of information, network and wireless security.

- Basic digital forensics knowledge would be useful.

Course Materials and Software

Each trainee will receive a hardcopy of the Training Manual and a Demo Software CD (30 days) for E-Detective (ED) and E-Detective Decoding Centre (EDDC).

Notes:

In the sample raw data packet analysis screenshots in this training material, different protocols are shown in different colors. |

| Table of Contents |

Module 0: Objectives of NPFAT Course

Module 1: Revision & Concepts of Digital Forensics

1.1 Introduction to Digital Forensics

1.2 Digital Forensics Processes and Procedures

1.2.1 Preparation and Identification

1.2.2 Collection

1.2.3 Preservation, Imaging and Duplication

1.2.4 Examination and Analysis

1.2.5 Presentation

1.3 Digital Forensics Field

1.3.1 Computer Forensics

1.3.2 Network Forensics

1.3.3 Mobile Forensics

1.4 Introduction to Network Packets

1.5 Packet Sniffer and Analyzer Tool

1.5.1 Wireshark

1.5.2 tcpdump/WinDump

1.5.3 Kismet

1.5.4 Ettercap

1.6 Packet Reconstruction Tool

Module 2: HTTP Network Packet Analysis

2.1 Introduction to HTTP Protocol

2.1.1 HTTP Client Connection

2.1.1.1 HTTP Client Web Access Procedures

2.1.1.2 HTTP Instructions/Commands

2.1.1.3 HTTP Response Code

2.1.1.4 HTTP Client Web Access Sample Packet Analysis

2.1.2 HTTP Host/Server Connection

2.1.2.1 HTTP Host/Server Service

2.1.2.2 HTTP Host Equipment Type

2.1.2.3 HTTP Host Operation and Packet Characteristics

2.3 HTTP Upload

2.3.1 HTTP Upload Sample Packet Analysis

2.4 HTTP Download

2.4.1 HTTP Download Sample Packet Analysis

| Module 1 : Revision & Concepts of Digital Forensics |

1.1 Introduction to Digital Forensics

Digital Forensics is a branch of forensic science pertaining to legal evidence found in computers and digital storage media. The goal of digital forensics is to explain the current state of a digital artifact. The term digital artifact can include a computer system, a storage medium (such as a hard disk, USB drive or CD-ROM/DVD-ROM), an electronic document (for example an email message, DOC file, PDF file or JPEG image) or even a sequence of packets moving over a computer network. The explanation can be as straightforward as "what information is here?" or as detailed as "what is the sequence of events responsible for the present situation?" |

1.2 Digital Forensics Processes and Procedures

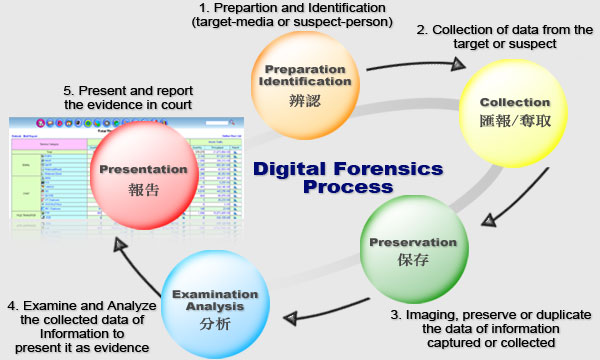

The Digital Forensics process has five basic steps:

- Preparation and Identification

- Collection

- Preservation (Imaging and Duplication)

- Examination and Analysis

- Presentation

1.2.1 Preparation and Identification

The Forensics Examiner or Investigator must complete all preparation before taking on a forensics case. This includes preparing the tools and equipment needed to do the job.

The Forensics Examiner or Investigator must be able to identify the suspect, a person or a group of people (for example by obtaining the suspect's personal information, including accommodation, job and travel records), or the target (such as the suspect's laptop, PC, mobile phone or notebook).

1.2.2 Collection

Once the suspect or target is identified, the next stage of the digital forensics process is to collect the essential data and information that will be useful for examination and analysis.

The required information can be collected from a physical device, such as a PC hard drive or USB drive. It can also come from an ongoing data transfer session, such as data captured or collected from a LAN or WLAN.

1.2.3 Preservation, Imaging and Duplication

The collected data or information needs to be preserved, imaged or duplicated, both to protect the collection against damage and to allow further analysis or reference.

When preserving or imaging the collected data or information, the forensics investigator needs to ensure that the duplicated data is not altered. Therefore a write blocker and hashing (MD5 or SHA1) are normally required.
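The hashing step can be illustrated with a minimal Python sketch. It uses only the standard `hashlib` module; the function names `hash_file` and `verify_copy` are made up for this illustration and are not part of any forensics product.

```python
import hashlib


def hash_file(path, algorithms=("md5", "sha1"), chunk_size=1024 * 1024):
    """Return {algorithm: hex digest} for the file at `path`."""
    hashers = {name: hashlib.new(name) for name in algorithms}
    with open(path, "rb") as f:
        # Read in chunks so that a large disk image need not fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def verify_copy(original_path, copy_path):
    """True when the duplicate produces the same digests as the original."""
    return hash_file(original_path) == hash_file(copy_path)
```

Recording the digests at collection time and recomputing them later is one way to show that an image or capture file has not changed in between.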

1.2.4 Examination and Analysis

The forensics examiner or investigator then examines and analyses the data or information obtained. The important information retrieved can be presented as evidence in court or used for further intelligence operations.

1.2.5 Presentation

The last stage of the digital forensics process is to report and present the findings and evidence in a readable and recognizable format that is acceptable in law and in court.

|

| Diagram 1.2: Digital Forensics Processes and Procedures |

|

|

1.3 Digital Forensics Field

The field of Digital Forensics has sub branches which include Computer Forensics, Network Forensics and Mobile Forensics.

1.3.1 Computer Forensics

Computer Forensics basically involves recovering and obtaining digital evidence from a suspect's computer (usually the hard disk), then analyzing the collected evidence and reporting it in a form presentable in court.

1.3.2 Network Forensics

Network Forensics normally involves collecting network packets, analyzing them, recovering the information in the packets back to its original content format and reporting it in a form presentable in court. The network packets collected may contain different service categories such as Email, Webmail, Instant Messaging, File Transfer (FTP and P2P), Telnet, Web Browsing (HTTP) etc.

1.3.3 Mobile Forensics

Mobile Forensics covers the collection and analysis of digital evidence from a smart phone such as a PDA phone or Windows Mobile phone. The newly popular iPhone is another target. |

1.4 Introduction to Network Packets

On the Internet, the network breaks content such as an Email message into parts of a certain size in bytes. These are the packets. Each packet carries the information that will help it get to its destination: the sender's IP address, the intended receiver's IP address, something that tells the network how many packets this Email message has been broken into, and the number of this particular packet. The packets carry the data in the protocols that the Internet uses: Transmission Control Protocol/Internet Protocol (TCP/IP). Each packet contains part of the body of your message. A typical packet contains perhaps 1,000 or 1,500 bytes.
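As a rough illustration of the idea above, the following Python sketch splits a message into numbered packets and puts them back together. It follows the simplified description in this section (each packet knows its number and the total count); real TCP/IP headers use sequence numbers and offsets instead, and the dictionary layout and function names here are assumptions made for the example.

```python
def split_into_packets(message: bytes, src: str, dst: str, size: int = 1500):
    """Break `message` into packets carrying at most `size` payload bytes."""
    chunks = [message[i:i + size] for i in range(0, len(message), size)] or [b""]
    total = len(chunks)
    return [
        {"src": src, "dst": dst, "number": n, "total": total, "payload": chunk}
        for n, chunk in enumerate(chunks, start=1)
    ]


def reassemble(packets):
    """Rebuild the original message, even if packets arrived out of order."""
    ordered = sorted(packets, key=lambda p: p["number"])
    if len(ordered) != ordered[0]["total"]:
        raise ValueError("some packets are missing")
    return b"".join(p["payload"] for p in ordered)
```

Packet reconstruction tools face the same problem in reverse: the captured packets must be put back in order before the original content can be displayed.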

Depending on the type of network, packets may be referred to by another name, such as frame, block, cell or segment.

Most network packets are split into three parts:

- Header - The header contains instructions about the data carried by the packet.

These instructions may include:

- Length of packet (some networks have fixed-length packets, while others rely on the header to contain this information)

- Synchronization (a few bits that help the packet match up to the network)

- Packet number (which packet this is in a sequence of packets)

- Protocol (on networks that carry multiple types of information, the protocol defines what type of packet is being transmitted: Email, Web page, streaming video)

- Destination address (where the packet is going)

- Originating address (where the packet came from)

- Payload - Also called the body or data of a packet. This is the actual data that the packet is delivering to the destination. If a packet is fixed-length, then the payload may be padded with blank information to make it the right size.

- Trailer - The trailer, sometimes called the footer, typically contains a couple of bits that tell the receiving device that it has reached the end of the packet. It may also have some type of error checking. The most common error checking used in packets is the Cyclic Redundancy Check (CRC). Here is how it works in certain computer networks: the sending device treats the bits of the packet as the coefficients of a polynomial and divides it by a fixed generator polynomial using modulo-2 arithmetic; the remainder of that division is stored in the trailer. The receiving device repeats the calculation on what it received and compares the result to the value stored in the trailer. If the values match, the packet is good. If they do not match, the packet is treated as corrupted: it is discarded or, depending on the protocol, the receiving device requests that the originating device resend it.
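The trailer check can be sketched in Python with the standard CRC-32 from the `zlib` module. The frame layout used here (payload followed by a 4-byte big-endian CRC) is a simplification chosen for this example and does not reproduce the exact bit ordering of any particular network type.

```python
import zlib


def add_trailer(payload: bytes) -> bytes:
    """Append a 4-byte CRC-32 trailer to the payload (illustrative layout)."""
    crc = zlib.crc32(payload) & 0xFFFFFFFF
    return payload + crc.to_bytes(4, "big")


def check_frame(frame: bytes) -> bool:
    """Recompute the CRC over the payload and compare it with the trailer."""
    payload, trailer = frame[:-4], frame[-4:]
    return (zlib.crc32(payload) & 0xFFFFFFFF) == int.from_bytes(trailer, "big")
```

A frame produced by `add_trailer` passes `check_frame`; if any payload byte is altered afterwards, the recomputed value no longer matches the trailer and the check fails.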

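| 32-bit word | Fields, left to right |
|---|---|
| 1 | Version (4 bits), Internet Header Length (4 bits), Type of Service (8 bits), Total Length (16 bits) |
| 2 | Identification (16 bits), Flags (3 bits), Fragment Offset (13 bits) |
| 3 | Time to Live (8 bits), Protocol (8 bits), Header Checksum (16 bits) |
| 4 | Source IP Address (32 bits) |
| 5 | Destination IP Address (32 bits) |
| Remainder | Options (if any), then Data |

Diagram 1.4: Sample IP Data Packet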

Example of IP Packets:

IP packets are composed of a header and payload. The IPv4 packet header consists of:

- 4 bits that contain the version, that specifies if it's an IPv4 or IPv6 packet,

- 4 bits that contain the Internet Header Length, which is the length of the header in multiples of 4 bytes (for example, a value of 5 means 20 bytes),

- 8 bits that contain the Type of Service, also referred to as Quality of Service (QoS), which describes what priority the packet should have,

- 16 bits that contain the length of the packet in bytes.

- 16 bits that contain an identification tag to help reconstruct the packet from several fragments,

- 3 bits that contain a reserved bit that is always zero, a flag that says whether the packet is allowed to be fragmented or not (DF: Don't Fragment), and a flag to state whether more fragments of a packet follow (MF: More Fragments),

- 13 bits that contain the fragment offset, which gives the position of this fragment within the original packet (in units of 8 bytes),

- 8 bits that contain the Time to live (TTL) which is the number of hops (router, computer or device along a network) the packet is allowed to pass before it dies (for example, a packet with a TTL of 16 will be allowed to go across 16 routers to get to its destination before it is discarded),

- 8 bits that contain the protocol (TCP, UDP, ICMP, etc...)

- 16 bits that contain the Header Checksum, a number used in error detection,

- 32 bits that contain the source IP address,

- 32 bits that contain the destination address.

Options of varying length may follow these fields, and then comes the data that the packet carries. An IP packet has no trailer. However, an IP packet is often carried as the payload inside an Ethernet frame, which has its own header and trailer.
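The field layout listed above can be decoded with Python's standard `struct` module. This is a minimal sketch that reads only the fixed 20-byte part of the header and ignores options; the function name and the dictionary keys are chosen for this example.

```python
import socket
import struct


def parse_ipv4_header(data: bytes) -> dict:
    """Decode the fixed 20-byte part of an IPv4 header."""
    if len(data) < 20:
        raise ValueError("an IPv4 header is at least 20 bytes long")
    (ver_ihl, tos, total_length, identification, flags_frag,
     ttl, protocol, checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", data[:20])
    return {
        "version": ver_ihl >> 4,                       # upper 4 bits
        "header_length": (ver_ihl & 0x0F) * 4,         # IHL counts 4-byte words
        "type_of_service": tos,
        "total_length": total_length,
        "identification": identification,
        "dont_fragment": bool(flags_frag & 0x4000),    # DF flag
        "more_fragments": bool(flags_frag & 0x2000),   # MF flag
        "fragment_offset": (flags_frag & 0x1FFF) * 8,  # offset counts 8-byte units
        "ttl": ttl,
        "protocol": protocol,                          # e.g. 6 = TCP, 17 = UDP
        "header_checksum": checksum,
        "src": socket.inet_ntoa(src),
        "dst": socket.inet_ntoa(dst),
    }
```

For example, the 20 bytes `45 00 00 73 00 00 40 00 40 11 b8 61 c0 a8 00 01 c0 a8 00 c7` decode to an IPv4 packet with a 20-byte header, a total length of 115 bytes, the DF flag set, a TTL of 64, protocol 17 (UDP), source 192.168.0.1 and destination 192.168.0.199.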

|

1.5 Packet Sniffer Tools

A packet analyzer (also known as a network analyzer, protocol analyzer or sniffer, or for particular types of networks, an Ethernet sniffer or wireless sniffer) is computer software or computer hardware that can intercept and log traffic passing over a digital network or part of a network. As data streams flow across the network, the sniffer captures each packet and eventually decodes and analyzes its content according to the appropriate RFC or other specifications.

Some common LAN/WLAN packet sniffer and analyzer tools are:

- Wireshark (previously known as Ethereal) www.wireshark.org

- tcpdump/WinDump www.winpcap.org

- Kismet www.kismetwireless.net

- Snort www.snort.org

- EtterCap http://ettercap.sourceforge.net

- WildPackets OmniPeek www.wildpackets.com

- Packet View Pro www.netscantools.com

- NetScout www.netscout.com

- PacketTrap www.packettrap.com

- NetStumbler www.netstumbler.com

- Effetech www.effetech.com

- CommView www.tamos.com

- Colasoft Capsa www.colasoft.com

- PacketMon www.analogx.com

- KisMAC http://kismac.de

- Packetyze http://rbytes.net/software/packetyze-download/

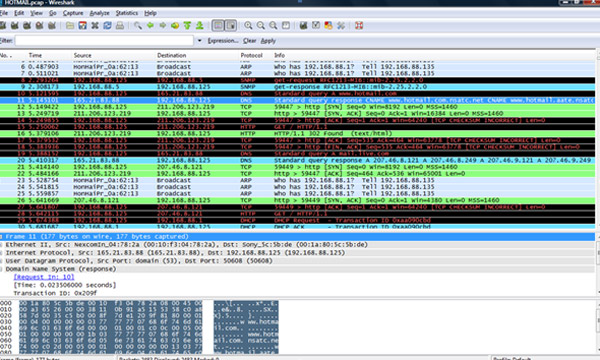

1.5.1 Wireshark

Wireshark is a free packet sniffer computer application. It is used for network troubleshooting, analysis, software and communications protocol development, and education. Originally named Ethereal, in May 2006 the project was renamed Wireshark due to trademark issues.

Wireshark is very similar to tcpdump, but it has a graphical front-end, and many more information sorting and filtering options. It allows the user to see all traffic being passed over the network (usually an Ethernet network but support is being added for others) by putting the network interface into promiscuous mode.

Wireshark uses the cross-platform GTK+ widget toolkit, and is cross-platform, running on various computer operating systems including Linux, Mac OS X, and Microsoft Windows. Released under the terms of the GNU General Public License, Wireshark is free software.

Wireshark is software that "understands" the structure of different networking protocols. Thus, it is able to display the encapsulation and the fields, along with their meanings, of different packets specified by different networking protocols. Wireshark uses pcap to capture packets, so it can only capture the packets on the networks supported by pcap.

- Data can be captured "from the wire" from a live network connection or read from a file that records the already-captured packets.

- Live data can be read from a number of types of network, including Ethernet, IEEE 802.11, PPP, and loopback.

- Captured network data can be browsed via a GUI, or via the terminal (command line) version of the utility, tshark.

- Captured files can be programmatically edited or converted via command-line switches to the "editcap" program.

- Data display can be refined using a display filter.

- Plugins can be created for dissecting new protocols.

Wireshark's native network trace file format is the libpcap format supported by libpcap and WinPcap, so it can read capture files from applications such as tcpdump and CA NetMaster that use that format. It can also read captures from other network analysers, such as snoop, Network General's Sniffer, and Microsoft Network Monitor.
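To give a feel for the libpcap trace format, here is a minimal Python sketch that reads a classic libpcap capture held in memory. It relies on the format's 24-byte global header and 16-byte per-packet record header, handles only microsecond-resolution files, and is not a replacement for a real library; the function name `read_pcap` is an assumption for this example.

```python
import struct

PCAP_MAGIC = 0xA1B2C3D4  # classic libpcap file, microsecond timestamps


def read_pcap(data: bytes):
    """Return (link_type, [(timestamp_seconds, packet_bytes), ...])."""
    magic = struct.unpack("<I", data[:4])[0]
    if magic == PCAP_MAGIC:
        endian = "<"   # file was written by a little-endian machine
    elif magic == 0xD4C3B2A1:
        endian = ">"   # file was written by a big-endian machine
    else:
        raise ValueError("not a classic libpcap capture file")
    # Global header: magic, version major/minor, timezone, sigfigs, snaplen, link type
    _magic, _major, _minor, _tz, _sigfigs, _snaplen, link_type = struct.unpack(
        endian + "IHHiIII", data[:24])
    packets, offset = [], 24
    while offset + 16 <= len(data):
        # Record header: seconds, microseconds, captured length, original length
        ts_sec, ts_usec, incl_len, _orig_len = struct.unpack(
            endian + "IIII", data[offset:offset + 16])
        offset += 16
        packets.append((ts_sec + ts_usec / 1_000_000, data[offset:offset + incl_len]))
        offset += incl_len
    return link_type, packets
```

A capture saved by tcpdump or Wireshark in this format could be loaded with `read_pcap(open("capture.pcap", "rb").read())`, where `capture.pcap` is a placeholder file name; a link type of 1 indicates Ethernet frames.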

|

| Diagram 1.5.1: A screenshot of Wireshark |

|

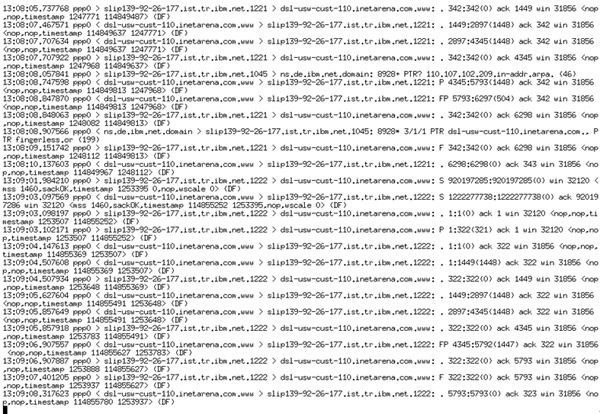

1.5.2 tcpdump/WinDump

tcpdump is a common packet sniffer that runs under the command line. It allows the user to intercept and display TCP/IP and other packets being transmitted or received over a network to which the computer is attached. Distributed under the BSD license, tcpdump is free software.

tcpdump works on most Unix-like operating systems: Linux, BSD, Mac OS X, HP-UX and AIX among others. In those systems, tcpdump uses the libpcap to capture packets.

There is also a port of tcpdump for Windows called WinDump; this uses WinPcap, which is a port of libpcap to Windows.

In some Unix-like operating systems, a user must have superuser privileges to use tcpdump because the packet capturing mechanisms on those systems require elevated privileges. However, the -Z option may be used to drop privileges to a specific unprivileged user after capturing has been set up. In other Unix-like operating systems, the packet capturing mechanism can be configured to allow non-privileged users to use it; if that is done, superuser privileges are not required.

The user may optionally apply a BPF-based filter to limit the number of packets seen by tcpdump; this renders the output more usable on networks with a high volume of traffic.

tcpdump is frequently used to debug applications that generate or receive network traffic. It can also be used for debugging the network setup itself, by determining whether all necessary routing is occurring properly, allowing the user to further isolate the source of a problem.

It is also possible to use tcpdump for the specific purpose of intercepting and displaying the communications of another user or computer. A user with the necessary privileges on a system acting as a router or gateway through which unencrypted traffic such as TELNET or HTTP passes can use tcpdump to view login IDs, passwords, the URLs and content of websites being viewed, or any other unencrypted information.

|

| Diagram 1.5.2: TCPDUMP Console Output |

|

1.5.3 Kismet

Kismet is a network detector, packet sniffer, and intrusion detection system for 802.11 wireless LANs. Kismet will work with any wireless card which supports raw monitoring mode, and can sniff 802.11a, 802.11g traffic. The program runs under Linux, FreeBSD, NetBSD, OpenBSD, and Mac OS X. The client can also run on Windows, although, aside from external drones, there is only one supported wireless hardware available as packet source. Distributed under the GNU General Public License, Kismet is free software.

Kismet is unlike most other wireless network detectors in that it works passively. This means that without sending any loggable packets, it is able to detect the presence of both wireless access points and wireless clients, and associate them with each other.

Kismet also includes basic wireless IDS features such as detecting active wireless sniffing programs including NetStumbler, as well as a number of wireless network attacks.

Kismet has the ability to log all sniffed packets and save them in a tcpdump/Wireshark or Airsnort compatible file format.

To find as many networks as possible, Kismet supports channel hopping. This means that it constantly changes from channel to channel non-sequentially, in a user-defined sequence with a default value that leaves big holes between channels (for example 1-6-11-2-7-12-3-8-13-4-9-14-5-10). The advantage with this method is that it will capture more packets because adjacent channels overlap.
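The example sequence above follows a simple pattern: step through the channels five at a time, then start again one channel higher. The short Python sketch below reproduces that pattern for illustration only; it is not Kismet's own code, and the function name and parameters are assumptions.

```python
def hop_sequence(channels: int = 14, stride: int = 5) -> list:
    """Order channels 1..channels so that consecutive hops are `stride` apart."""
    sequence = []
    for start in range(1, stride + 1):
        # e.g. start=1 gives 1, 6, 11; start=2 gives 2, 7, 12; and so on
        sequence.extend(range(start, channels + 1, stride))
    return sequence


print("-".join(str(ch) for ch in hop_sequence()))
# prints 1-6-11-2-7-12-3-8-13-4-9-14-5-10
```

Because neighbouring 2.4 GHz channels overlap, traffic on a channel that is skipped for the moment can often still be picked up while the card is tuned to a nearby one.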

Kismet also supports logging of the geographical coordinates of the network if the input from a GPS receiver is additionally available.

1.5.4 Ettercap

Ettercap is a Unix and Windows tool for computer network protocol analysis and security auditing. It is capable of intercepting traffic on a network segment, capturing passwords, and conducting active eavesdropping against a number of common protocols. It is free open source software, licensed under the terms of the GNU General Public License.

Ettercap supports active and passive dissection of many protocols (including ciphered ones) and provides many features for network and host analysis. Ettercap offers four modes of operation:

- IP-based: packets are filtered based on IP source and destination.

- MAC-based: packets are filtered based on MAC address, useful for sniffing connections through a gateway.

- ARP-based: uses ARP poisoning to sniff on a switched LAN between two hosts (full-duplex).

- PublicARP-based: uses ARP poisoning to sniff on a switched LAN from a victim host to all other hosts (half-duplex).

In addition, the software also offers the following features:

- Character injection into an established connection: characters can be injected into a server (emulating commands) or to a client (emulating replies) while maintaining a live connection.

- SSH1 support: the sniffing of a username and password, and even the data of an SSH1 connection. Ettercap is the first software capable of sniffing an SSH connection in full duplex.

- HTTPS support: the sniffing of HTTP SSL secured data--even when the connection is made through a proxy.

- Remote traffic through a GRE tunnel: the sniffing of remote traffic through a GRE tunnel from a remote Cisco router, with the ability to perform a man-in-the-middle attack on it.

- Plug-in support: creation of custom plugins using Ettercap's API.

- Password collectors for: TELNET, FTP, POP, IMAP, rlogin, SSH1, ICQ, SMB, MySQL, HTTP, NNTP, X11, Napster, IRC, RIP, BGP, SOCKS 5, IMAP 4, VNC, LDAP, NFS, SNMP, Half-Life, Quake 3, MSN, YMSG

- Packet filtering/dropping: setting up a filter that searches for a particular string (or hexadecimal sequence) in the TCP or UDP payload and replaces it with a custom string/sequence of choice, or drops the entire packet.

- OS fingerprinting: determine the OS of the victim host and its network adapter.

- Kill a connection: killing connections of choice from the connections-list.

- Passive scanning of the LAN: retrieval of information about hosts on the LAN, their open ports, the version numbers of available services, the type of the host (gateway, router or simple PC) and estimated distances in number of hops.

- Hijacking of DNS requests.

Ettercap also has the ability to actively or passively find other poisoners on the LAN.

|

1.6 Introduction to Packet Reconstruction Tool

A packet reconstruction tool or solution allows the payload content of raw data packets to be re-assembled and displayed in its original, exact content format, as it was seen on the user's PC screen. There are basically two categories of packet reconstruction tool: online (real-time) reconstruction tools and offline reconstruction tools. Decision Computer Group designs and manufactures packet reconstruction solutions such as E-Detective, E-Detective Decoding Centre (EDDC) and Wireless-Detective. E-Detective is a real-time Internet reconstruction system for wired Ethernet LANs, which allows the playback of various Internet activities. EDDC is an offline reconstruction system that allows investigators to work on different cases using pre-captured raw data. Wireless-Detective is a real-time (on-the-fly) capturing and reconstruction solution for WLAN Internet traffic.

Various Internet protocols that can be reconstructed by Decision Computer Group reconstruction tools include:

- Email (POP3, IMAP, SMTP)

- Webmail (Yahoo Mail, Windows Live Hotmail, Gmail, Giga Mail, Hinet, Sina Mail etc.)

- Instant Messaging (Yahoo, MSN, ICQ, QQ, IRC, UT Chat Room, Google Talk, Skype)

- File Transfer (FTP – Upload/Download)

- File Transfer (P2P – BitTorrent, FastTrack, eMule/eDonkey etc.)

- HTTP Web (Link, Content, Reconstruct, Upload/Download, Video Streaming)

- Online Games (Ragnarok Online, Kartrider, MapleStory etc.)

- Telnet

- VoIP (SIP and H.323)

|

| Module 2 : HTTP Network Packet Analysis |

2.1 Introduction to HTTP Protocol

Hypertext Transfer Protocol (HTTP) is an application-level protocol for distributed, collaborative, hypermedia information systems. Its use for retrieving inter-linked resources led to the establishment of the World Wide Web.

HTTP development was coordinated by the World Wide Web Consortium and the Internet Engineering Task Force (IETF), culminating in the publication of a series of Requests for Comments (RFCs), most notably RFC 2616 (June 1999), which defines HTTP/1.1, the version of HTTP in common use.

HTTP is a request/response standard between a client and a server. The client is the end-user and the server is the web site. The client making an HTTP request (using a web browser, spider, or other end-user tool) is referred to as the user agent. The responding server, which stores or creates resources such as HTML files and images, is called the origin server. In between the user agent and origin server may be several intermediaries, such as proxies, gateways, and tunnels. HTTP is not constrained to using TCP/IP and its supporting layers, although this is its most popular application on the Internet. Indeed HTTP can be "implemented on top of any other protocol on the Internet, or on other networks." HTTP only presumes a reliable transport; any protocol that provides such guarantees can be used.

Typically, an HTTP client initiates a request. It establishes a Transmission Control Protocol (TCP) connection to a particular port on a host (port 80 by default). An HTTP server listening on that port waits for the client to send a request message. Upon receiving the request, the server sends back a status line, such as "HTTP/1.1 200 OK", and a message of its own, the body of which is perhaps the requested resource, an error message, or some other information.

Resources to be accessed by HTTP are identified using Uniform Resource Identifiers (URIs) (or, more specifically, Uniform Resource Locators (URLs)) using the http or https URI schemes.
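The request and status line described above are plain text, which is why they are easy to recognise in captured packets. The following Python sketch builds a minimal HTTP/1.1 GET request and splits a status line into its parts. It does not open a network connection, and the function names are assumptions made for this example.

```python
def build_get_request(host: str, path: str = "/") -> bytes:
    """Build the bytes a client would send for a simple GET request."""
    lines = [
        f"GET {path} HTTP/1.1",  # request line: method, Request-URI, version
        f"Host: {host}",         # the Host header is mandatory in HTTP/1.1
        "Connection: close",
        "",                      # an empty line ends the header section
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def parse_status_line(line: str):
    """Split a status line such as 'HTTP/1.1 200 OK' into its three parts."""
    version, code, reason = line.strip().split(" ", 2)
    return version, int(code), reason
```

For instance, `parse_status_line("HTTP/1.1 200 OK")` yields the version `HTTP/1.1`, the numeric code `200` and the reason phrase `OK`. A status line without a reason phrase would need extra handling that this sketch leaves out.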

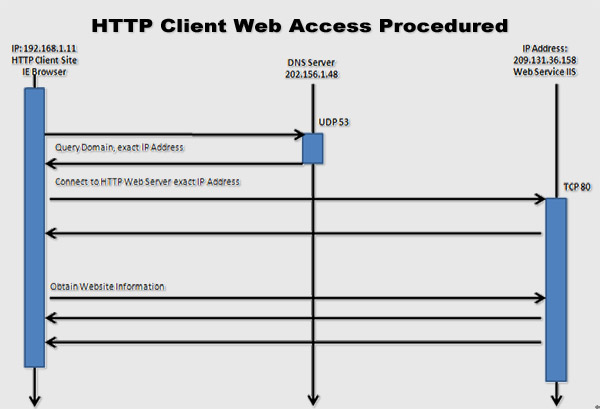

2.1.1 HTTP Client Connection

HTTP web access is the most common communication service on the Internet. An HTTP connection normally uses TCP port 80.

Normally, HTTP web connection services can be divided into two types:

- Web-Client: Normal web access from the client end, where the user opens a web browser, enters a URL and accesses the website. The traffic contains only DNS and HTTP packets.

- Web-Master: Remote users connect to or access your web site/server. The traffic contains only HTTP packets.

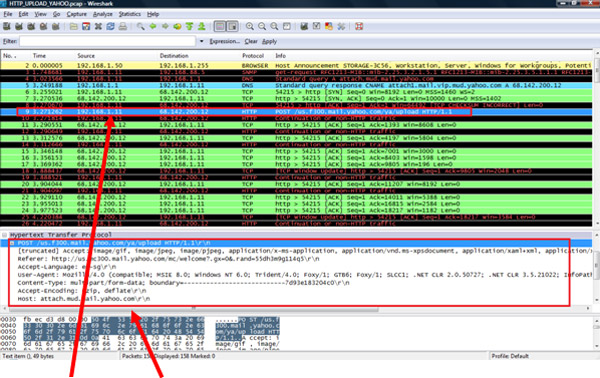

2.1.1.1 HTTP Client Web Access Procedures

|

Diagram 2.1.1.1: HTTP Client Web Access Procedures |

|

2.1.1.2 HTTP Instructions/Commands

| Command | Description |
|---|---|
| GET | The GET method means retrieval of whatever information (in the form of an entity) is identified by the Request-URI. If the Request-URI refers to a data-producing process, it is the produced data which shall be returned as the entity in the response and not the source text of the process, unless that text happens to be the output of the process. |
| POST | The POST method is used to request that the origin server accept the entity enclosed in the request as a new subordinate of the resource identified by the Request-URI in the Request-Line. |
| HEAD | The HEAD method is identical to GET except that the server MUST NOT return a message-body in the response. The meta information contained in the HTTP headers in response to a HEAD request SHOULD be identical to the information sent in response to a GET request. This method can be used for obtaining meta information about the entity implied by the request without transferring the entity-body itself. This method is often used for testing hypertext links for validity, accessibility, and recent modification. |
| OPTIONS | The OPTIONS method represents a request for information about the communication options available on the request/response chain identified by the Request-URI. This method allows the client to determine the options and/or requirements associated with a resource, or the capabilities of a server, without implying a resource action or initiating a resource retrieval. |
| TRACE | The TRACE method is used to invoke a remote, application-layer loop-back of the request message. The final recipient of the request SHOULD reflect the message received back to the client as the entity-body of a 200 (OK) response. |
| CONNECT | This specification reserves the method name CONNECT for use with a proxy that can dynamically switch to being a tunnel (e.g. SSL tunnelling). |
| DELETE | The DELETE method requests that the origin server delete the resource identified by the Request-URI. |

2.1.1.3 HTTP Response Code

| Code | Description |
|---|---|
| 100 | Continue |
| 101 | Switching Protocols |
| 200 | OK |
| 201 | Created |
| 202 | Accepted |
| 404 | Not Found |
| 405 | Method Not Allowed |
| 406 | Not Acceptable |
| 407 | Proxy Authentication Required |
| 408 | Request Timeout |
| 409 | Conflict |
| 410 | Gone |
| 411 | Length Required |
| 412 | Precondition Failed |
| 413 | Request Entity Too Large |
| 414 | Request-URI Too Large |
| 415 | Unsupported Media Type |
| 500 | Internal Server Error |
| 501 | Not Implemented |
| 502 | Bad Gateway |
| 503 | Service Unavailable |
| 504 | Gateway Timeout |
| 505 | HTTP Version Not Supported |
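When reading captured responses, it helps to know that the first digit of the code gives its class. The Python sketch below looks up the reason phrase with the standard `http.HTTPStatus` enumeration and adds the class name; the `CLASSES` table and the function name are assumptions for this example, and newer Python versions may word a few phrases slightly differently from the table above.

```python
from http import HTTPStatus

# Response classes, keyed by the first digit of the status code
CLASSES = {
    1: "Informational",
    2: "Success",
    3: "Redirection",
    4: "Client Error",
    5: "Server Error",
}


def describe_status(code: int) -> str:
    """Return a line such as '404 Not Found (Client Error)'."""
    try:
        phrase = HTTPStatus(code).phrase
    except ValueError:
        phrase = "Unknown"  # code is not defined in the standard library
    return f"{code} {phrase} ({CLASSES.get(code // 100, 'Unknown class')})"
```

Calling `describe_status(404)` returns `404 Not Found (Client Error)`, which makes it quick to separate client-side problems (4xx) from server-side ones (5xx) in a long capture.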

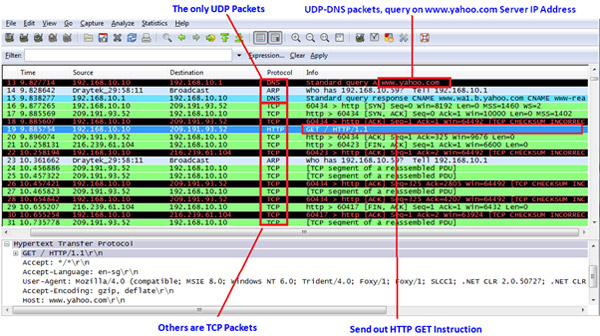

2.1.1.4 HTTP Client Web Access Packet Analysis

|

Diagram 2.1.1.4.1: HTTP Client Web Access Sample Packet Analysis |

|

|

Diagram 2.1.1.4.2: HTTP Client Web Access - Content Sample Packet Analysis |

|

2.1.2 HTTP Host/Server Connection

2.1.2.1 HTTP Host/Server Service

The majority of Internet services depend on HTTP (Hypertext Transfer Protocol) to complete their tasks. HTTP is specified in RFC 1945 (HTTP/1.0) and RFC 2616 (HTTP/1.1). Besides serving clients' requests for basic Web surfing, HTTP also supports Web query and access functions such as Webmail, Web database services, Web storage services and even organizational ERP user interfaces. HTTP-based services have enabled a tremendous amount of Internet-based e-business.

In the previous section, we looked at the HTTP Client Connection (from the perspective of the client, or Web-Client). Here we look at it from the HTTP Host/Server Connection (Web-Master) angle.

2.1.2.2 HTTP Host Equipment Type

Normally there are three types of host equipment on the Internet, as explained below.

Type 1: Internet Service Site

This refers to the host/server site, known as the Server-site. This type of equipment provides different Internet services and basically waits for clients/users to connect to it, which is known as the Listen state. Common types of server include Web Server, Mail Server, Database Server and DNS Server.

Type 2: Internet Client Site

This refers to the client/user site, known as the Client-site. This type of equipment is normally a user's computer or PC, which requests different services through the Internet.

Type 3: Internet Transportation Site

This refers to the Transport-site. It provides the service of delivering Internet packets between Server and Client in both directions. It follows the OSI layers in providing this service.

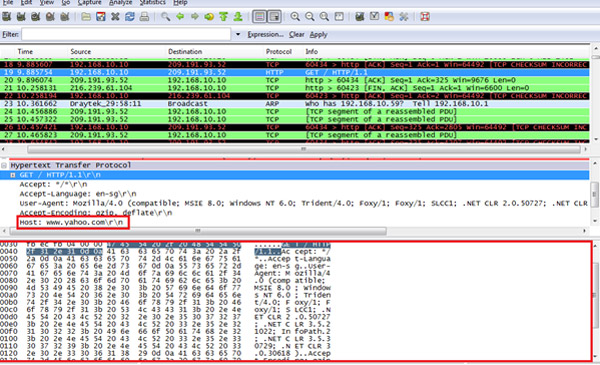

2.1.2.3 HTTP Host Operation and Packet Characteristics

|

Diagram 2.1.2.3: HTTP Host/Server Operation |

|

HTTP Host Packet Characteristics

- No website ping (ICMP) packet

- No DNS-Query packet

- Most common HTTP instructions/commands are GET and POST

- Most common HTTP response codes are 2xx and 3xx |

2.3 HTTP Upload

File Upload or HTTP upload allows you to send files to a web server using standard forms. In the same way that there are form elements that allow you to enter text and others that allow you to choose items from a list, there is a form element that allows you to choose a file.

Like so many useful elements of HTML, File Upload was not supported in Internet Explorer 3.0 but was soon afterwards added in version 3.02. It is available in Netscape Navigator from version 2.02 onwards. So the great advantage of HTTP upload is that (as much as can be said for anything on the web) upload facilities are pretty much standard on any browser that will come to your web site.
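Browsers normally send a form-based file upload as an HTTP POST whose body uses the `multipart/form-data` encoding, so that is what tends to appear in the captured packets. The Python sketch below builds such a body by hand for illustration; the boundary string, field name and function name are arbitrary choices for this example, and real browsers generate their own boundaries.

```python
def build_multipart(field: str, filename: str, content: bytes,
                    boundary: str = "----npfat-demo-boundary"):
    """Return (headers, body) for a one-file multipart/form-data upload."""
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="{field}"; filename="{filename}"\r\n'
        "Content-Type: application/octet-stream\r\n"
        "\r\n"
    ).encode("utf-8")
    tail = f"\r\n--{boundary}--\r\n".encode("utf-8")  # closing boundary
    body = head + content + tail
    headers = {
        "Content-Type": f"multipart/form-data; boundary={boundary}",
        "Content-Length": str(len(body)),
    }
    return headers, body
```

In a packet capture, the file name in the `Content-Disposition` line and the boundary markers are useful landmarks for locating the uploaded file's bytes inside the POST body.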

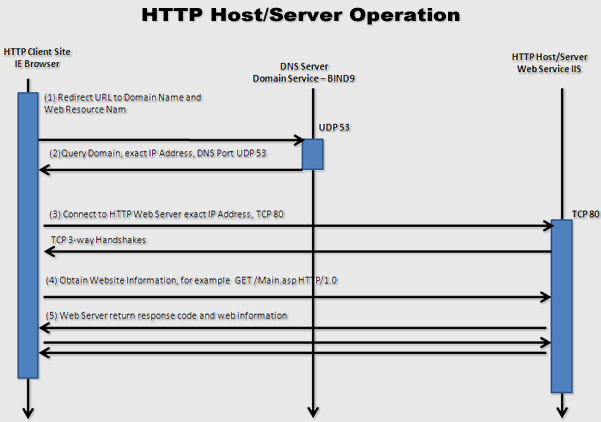

2.3.1 HTTP Upload Sample Packet Analysis

|

HTTP POST Command – HTTP Upload a file to Host attach.mud.mail.yahoo.com |

|

|

2.4 HTTP Download

This refers to downloading a file over HTTP from a Web Server. All Web browsers support downloading or obtaining a file or information from a Web Server.

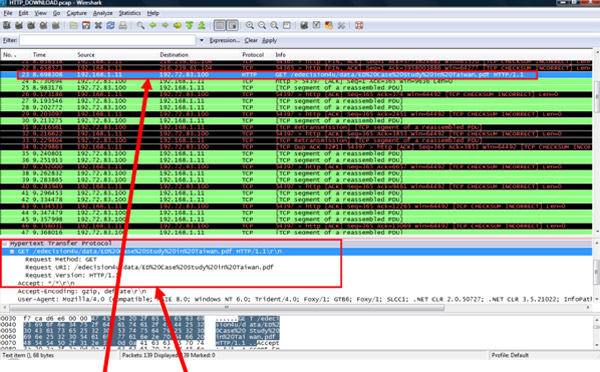

2.4.1 HTTP Download Sample Packet Analysis

|

HTTP GET – HTTP download of a file named “ED Case Study in Taiwan.pdf” |

|
